Three Papers at Eurographics 2026

We are happy to announce that three papers from our research group have been accepted to Eurographics 2026.

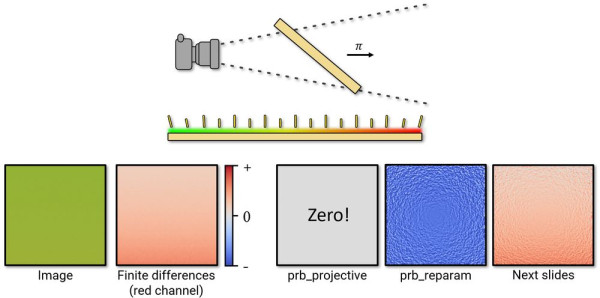

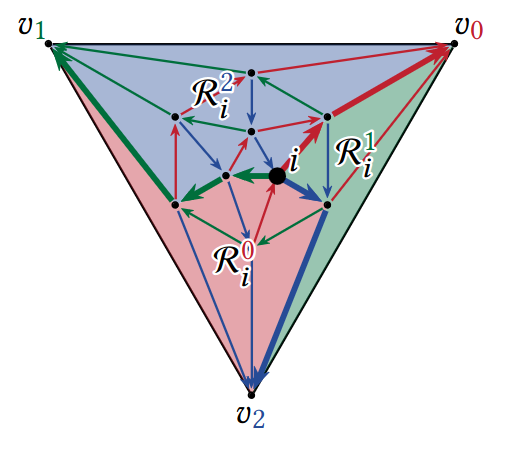

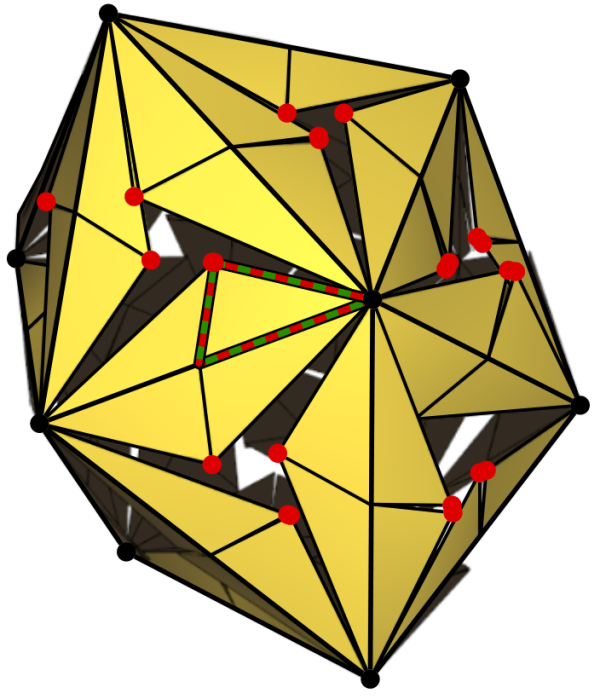

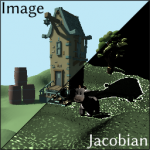

"Contouring Signed Distance Fields by Approximating Gradients" improves contouring by approximating gradient information using a tessellation. "Hierarchical Optimization of the As-Rigid-As-Possible Energy" introduces a multi-level optimization of the ARAP energy for surface deformation. "Basis Networks: Learning basis functions for free-form triangulations" improves surface smoothness for neural surface representations by learning basis functions from mesh examples.